Automated Performance Testing & Graphics Metrics

Data-driven validation for rendering and runtime decisions

Overview

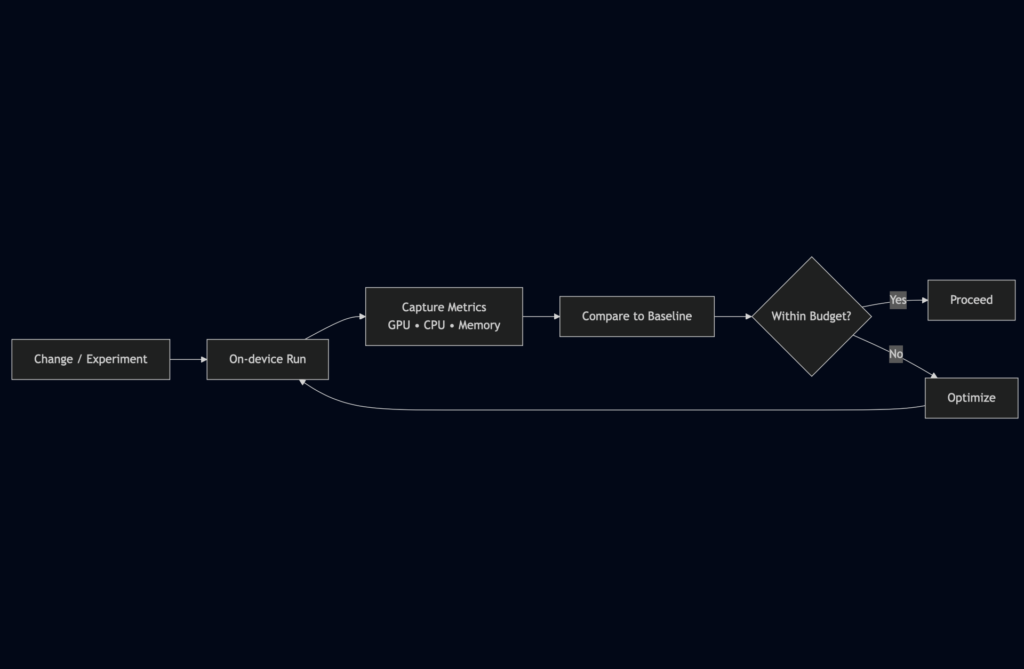

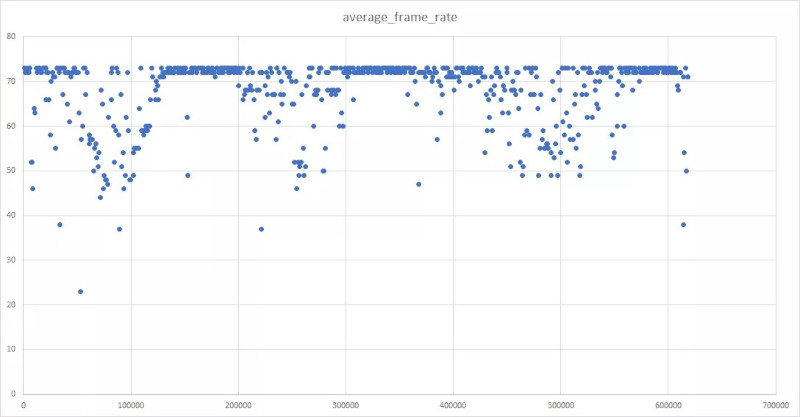

This project built lightweight tooling that sits alongside existing automation to measure the real performance cost of rendering choices and validate them. Rather than owning CI infrastructure, I built targeted systems whose metrics reduced reliance on subjective judgment and informed engineering trade-offs.

Key Contributions

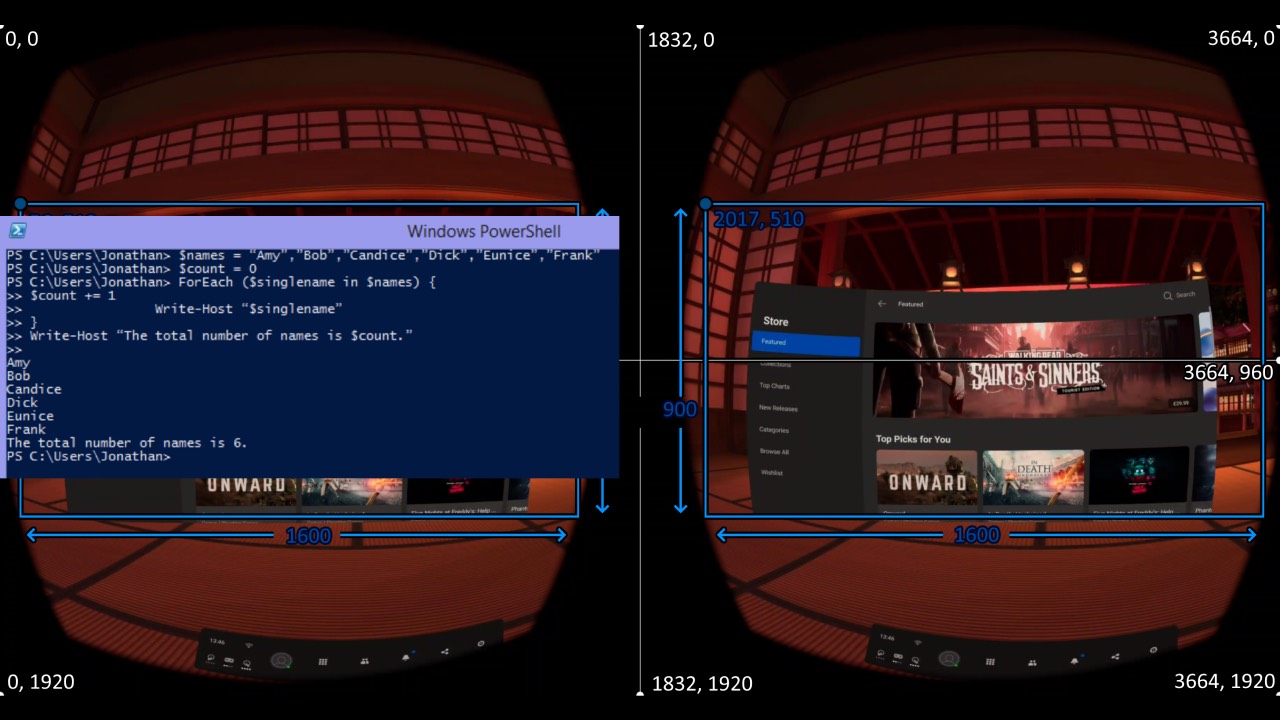

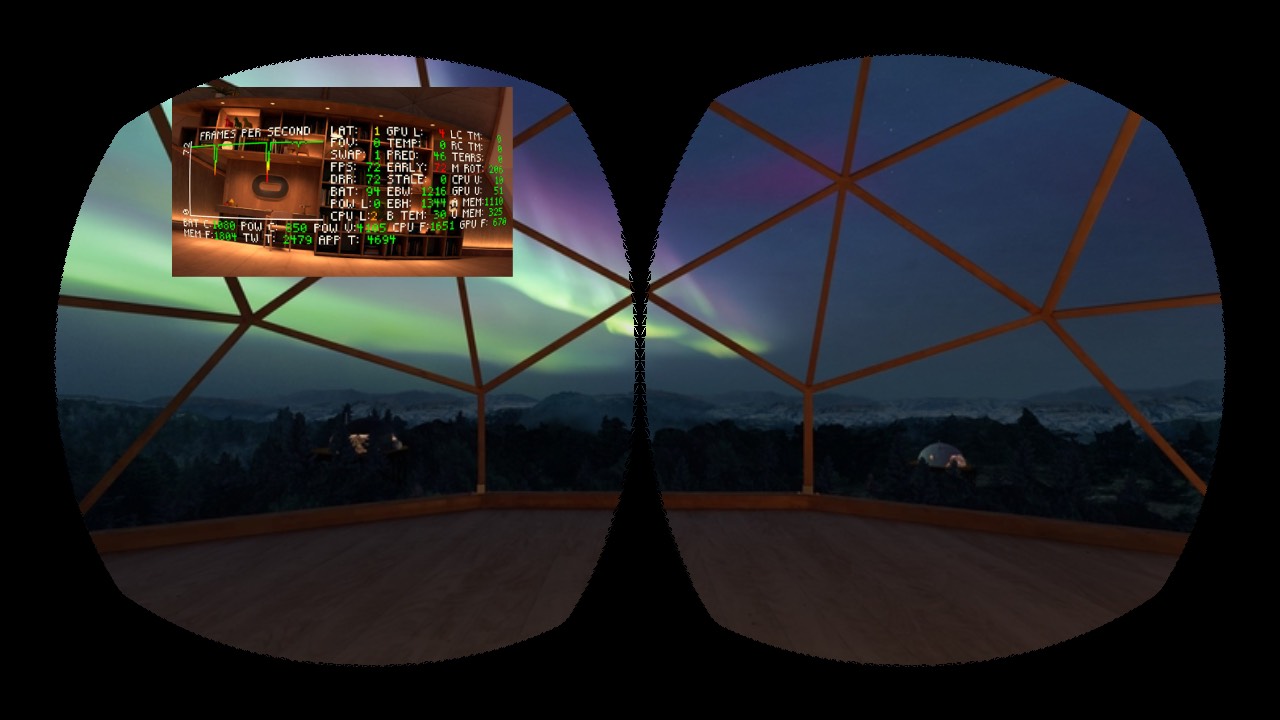

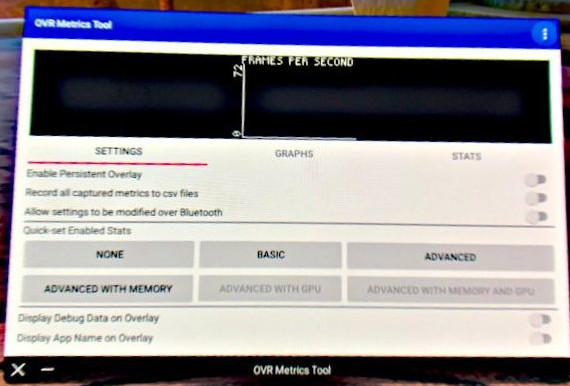

Built Python-based performance test systems to capture GPU and runtime metrics

Profiled shaders, animations, and transitions directly on device

Supplied quantitative data to engineering and leadership to guide decisions

Identified performance regressions early and supported targeted optimizations

Integrated measurement into regular review and iteration workflows
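To illustrate the kind of check behind the regression work listed above, here is a minimal Python sketch that compares a candidate capture against a baseline. It is not the project's actual code: the function names (`summarize`, `find_regressions`), the 10% tolerance, and the sample frame times are all assumptions made up for the example.

```python
from statistics import median, quantiles


def summarize(frame_times_ms):
    """Return median and 95th-percentile frame time for one capture."""
    # quantiles(n=20) returns 19 cut points; the last one is the 95th percentile.
    p95 = quantiles(frame_times_ms, n=20, method="inclusive")[-1]
    return {"median_ms": median(frame_times_ms), "p95_ms": p95}


def find_regressions(baseline, candidate, tolerance=0.10):
    """List metrics where the candidate exceeds the baseline by more than tolerance."""
    regressions = []
    for name, base_value in baseline.items():
        new_value = candidate[name]
        if new_value > base_value * (1.0 + tolerance):
            regressions.append((name, base_value, new_value))
    return regressions


# Hypothetical frame times in milliseconds, for illustration only.
baseline_capture = [16.0, 16.2, 16.1, 16.4, 16.3, 16.2, 16.5, 16.1]
candidate_capture = [18.9, 19.2, 19.0, 19.4, 19.1, 19.3, 19.6, 19.0]

baseline_summary = summarize(baseline_capture)
candidate_summary = summarize(candidate_capture)

for name, old, new in find_regressions(baseline_summary, candidate_summary):
    print(f"{name}: {old:.2f} ms -> {new:.2f} ms")
```

Reporting both the median and a high percentile is a deliberate choice in this sketch: the median tracks typical cost, while the 95th percentile can surface hitches in transitions that an average would hide.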

ROLE

Technical Artist

LANGUAGES / SCRIPTS

Python, PowerShell, GLSL (profiling context)

FOCUS

Instrumentation • metric capture • validation workflows • decision support

CONTEXT

As visual fidelity increased, subjective evaluation became insufficient. This work introduced repeatable measurement to support informed decisions before and after public release.